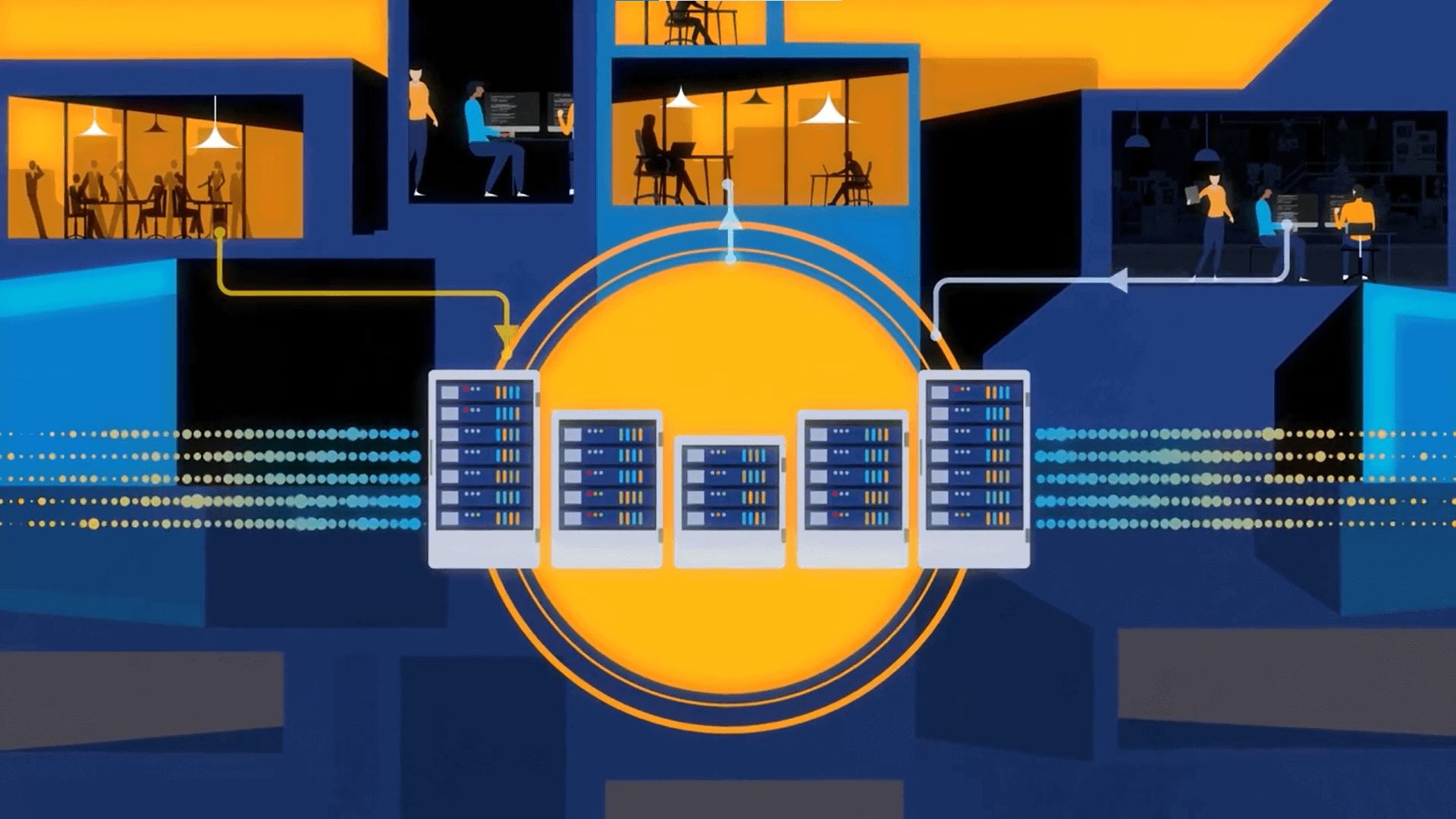

“Edge processing means that we replicate processing and data storage that’s close to the source.”

It’s been a common problem for years: If you gather large amounts of data from a device or other source, and you need to process that data instantly, then moving that data to a centralized database every time introduces latency.

IoT brings this issue up again. For example, say that there is a machine on a factory floor that analyzes the quality of an auto part that it makes. If the part is not up to quality, as determined by an optical scanner, then it’s automatically rejected. This keeps a human from having to look at the part and slow down the process. However, it takes a great deal of time to transmit the data and image back to the centralized database and compute engine, where a determination is made as to whether the part passed, and then to communicate that result back to the machine.

The cloud complicates this process even more. Instead of sending the data back to the data center, we send it to a remote server that can be thousands of miles away, and, to make things worse, we send it over the open Internet. However, considering the amount of processing that needs to occur, the cloud may be the best bang for the buck.

So, to solve this problem, many suggest “computing at the edge.” It’s not a new concept, but it’s something that was recently modernized. Computing at the edge pushes most of the data processing out to the edge of the network, close to the source. Then it’s a matter of deciding which data and processing belong at the edge, and which belong in the centralized system.

The concept is to process, at the edge, the data that needs to return to the device quickly. In this case, that is the pass/fail data that indicates the success or failure of the physical manufacturing of the auto part. However, the data should also be centrally stored, and, ultimately, all of the data is sent back to the centralized system, cloud or not, for permanent storage and for future processing.
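To make that split concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a reference implementation: the `EdgeNode` class, the `DEFECT_THRESHOLD` value, the `defect_score` field, and the `upload` callback are all hypothetical names invented for this example. The pass/fail decision happens locally with no network round trip, while every record is buffered and later shipped to the centralized system.

```python
from collections import deque
from dataclasses import dataclass
from typing import Callable, Deque, List

# Hypothetical cutoff; a real inspection model would be far richer.
DEFECT_THRESHOLD = 0.2


@dataclass
class Inspection:
    part_id: str
    defect_score: float  # assumed scanner output, 0.0 (clean) to 1.0 (defective)
    passed: bool


class EdgeNode:
    """Decides pass/fail locally and buffers records for the central system."""

    def __init__(self, upload: Callable[[List[Inspection]], None]) -> None:
        # `upload` stands in for whatever transport reaches the central store.
        self._upload = upload
        self._pending: Deque[Inspection] = deque()

    def inspect(self, part_id: str, defect_score: float) -> bool:
        # Time-critical path: no network call, just a local decision.
        passed = defect_score < DEFECT_THRESHOLD
        self._pending.append(Inspection(part_id, defect_score, passed))
        return passed

    def sync(self) -> int:
        # Deferred path: send everything buffered so far to the central system.
        batch = list(self._pending)
        if not batch:
            return 0
        self._upload(batch)  # if this raises, the records stay buffered
        self._pending.clear()
        return len(batch)


# Usage: an in-memory list plays the role of the centralized store.
central_store: List[Inspection] = []
node = EdgeNode(upload=central_store.extend)
node.inspect("part-001", 0.05)  # accepted immediately at the edge
node.inspect("part-002", 0.90)  # rejected immediately at the edge
node.sync()                     # both records now live in central_store
```

The design choice worth noting is that `inspect` never waits on the network, so the machine gets its answer at local speed, while `sync` can run on whatever schedule the connection to the centralized system allows.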

Thus, edge processing means that we replicate processing and data storage that’s close to the source, but it’s more of a master/slave type of architecture, where the centralized system ultimately becomes the point of storage for all of the data, and the edge processing is merely a node of the centralized system.

We need to think a bit harder about how to build our IoT systems, and that means more money and time must go into the development process. However, the performance that well-designed IoT systems provide in meeting real-time needs will more than justify the added complexity.

I suspect that computing-at-the-edge architectures will become more popular as IoT becomes more popular. Indeed, we’ll get better at it, and purpose-built technologies will start to appear. Computing at the edge of an IoT architecture is something that should be on your radar, if IoT is in your future.