The new offerings aim to help businesses modernize data management, make data available to applications in real time, and manage data as a true business asset.

TIBCO Software today launched an initiative to accelerate the adoption of real-time analytics applications at a time when many organizations are speeding up digital business transformation efforts to mitigate the impact of the economic downturn brought on by the COVID-19 pandemic.

Announced at the online TIBCO NOW 2020 conference, a TIBCO Cloud Data Streams offering combined with the latest edition of the TIBCO Spotfire analytics application is at the core of a TIBCO Hyperconverged Analytics platform that makes it possible to collect and analyze data in real time.

At the same time, an existing TIBCO Responsive Application Mesh blueprint is being extended to make it simpler to achieve that goal, says TIBCO CTO Nelson Petracek.

At the core of that framework are a bevy of updates to existing TIBCO offerings, including a Big Basin update to TIBCO Cloud Integration that adds support for robotic process automation (RPA) capabilities alongside a revamped user interface.

TIBCO has also added TIBCO Cloud Mesh, which makes it simpler for IT teams to create and discover, for example, application programming interfaces (APIs) and integrations in TIBCO Cloud. The company has also updated TIBCO BusinessEvents to provide more contextual processing of events in real time via integrations with the open source Apache Kafka, Apache Cassandra, and Apache Ignite frameworks and databases.

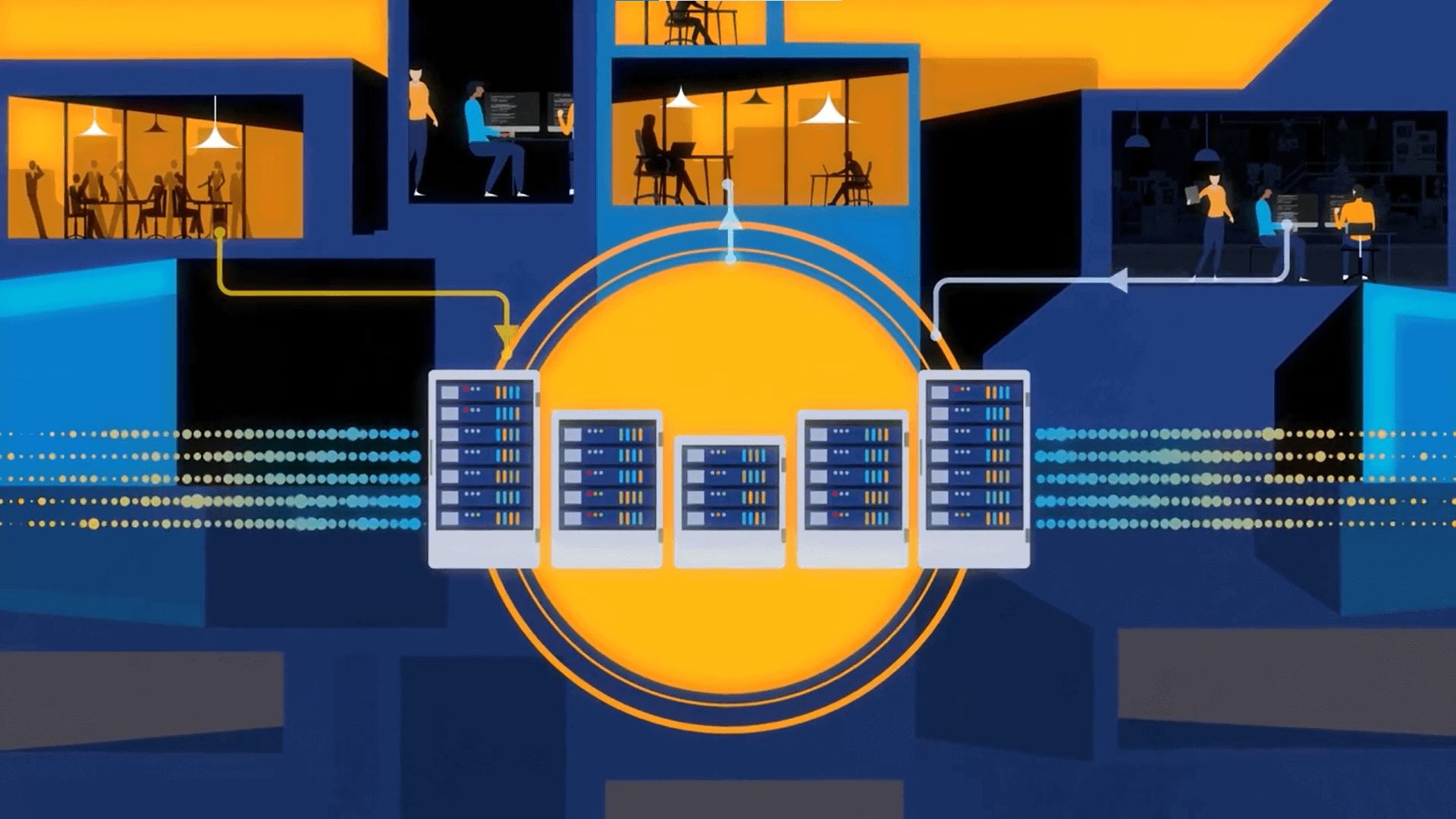

Finally, TIBCO revealed that TIBCO Business Process Management (BPM) Enterprise can now be deployed using containers. The company also launched TIBCO Any Data Hub, a data management blueprint based on TIBCO Data Virtualization software that can be connected to more than 300 data sources.

“Data virtualization has emerged as a critical lynchpin for accelerating digital business transformation,” says Petracek. In an ideal world, organizations would centralize all their data within a data lake. However, building data lakes takes time that many organizations don’t have as they race to reengineer business processes, notes Petracek. Data virtualization tools enable applications to access data without having to move it, which Petracek says lets IT teams more quickly deploy applications capable of accessing data wherever it happens to reside.

“Data virtualization is at the core of those initiatives,” says Petracek.

As they build and deploy these applications, organizations are also trying to move beyond batch-oriented processing to provide more responsive application experiences, notes Petracek. While there is currently a lot of focus on application development, Petracek says it’s also becoming apparent that the way data is managed needs to be modernized as well to make data available to applications in real time.

Ultimately, organizations are finally moving toward managing their data as a true business asset, notes Petracek. The issue is determining what data has the most business value, which in turn drives the digital business processes around which the organization operates, says Petracek.

It may be a while before organizations modernize data management across the entire enterprise. Data virtualization tools, however, clearly have a role to play in jump-starting that process. The challenge now is first figuring out what data is required to drive a process and then rationalizing all the conflicting data that today resides in far too many application silos.