As Oracle's experience shows, Apache Spark excels at running machine learning queries on massive data sets.

Predicting consumer behavior is considered the holy grail of marketing, but a perennial problem is separating the customers who are ready to buy from the noise.

As a data science goal, it’s a classic “needle-in-the-haystack” exercise. Web activity such as search and browsing may generate petabytes of data, and on top of that there’s past-purchase history and offline behavior such as in-store purchases. Then there are reams of demographic data (age, income, affinities) to analyze in search of the coveted target market.

So how do you find the buyer in the haystack?

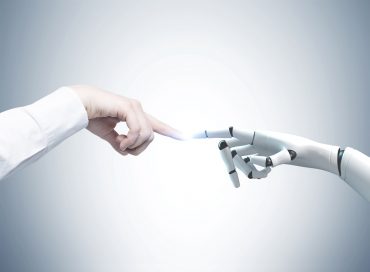

Alexander Sadovsky, director of data science at Oracle, runs a team responsible for crunching data on audience behavior and advertising across channels, whether on Google, on Facebook, on the wider internet, or in stores. After analyzing such data, Oracle offers advertisers something akin to a real-time target marketing list, in which machine learning has already determined which customers are most likely to buy the advertiser’s products.

“What we’re looking for with machine learning is what is the fingerprint of the buyer of a product, from past behavior to future behavior,” he said.

Sadovsky gave the example of predicting a hotel reservation. A system might look at the behavior of individuals who previously booked the room to derive a predictive model of customers who might do the same in the future. A model might also target people in certain income ranges and with certain product affinities (soccer moms, young men, retirees, you name it).

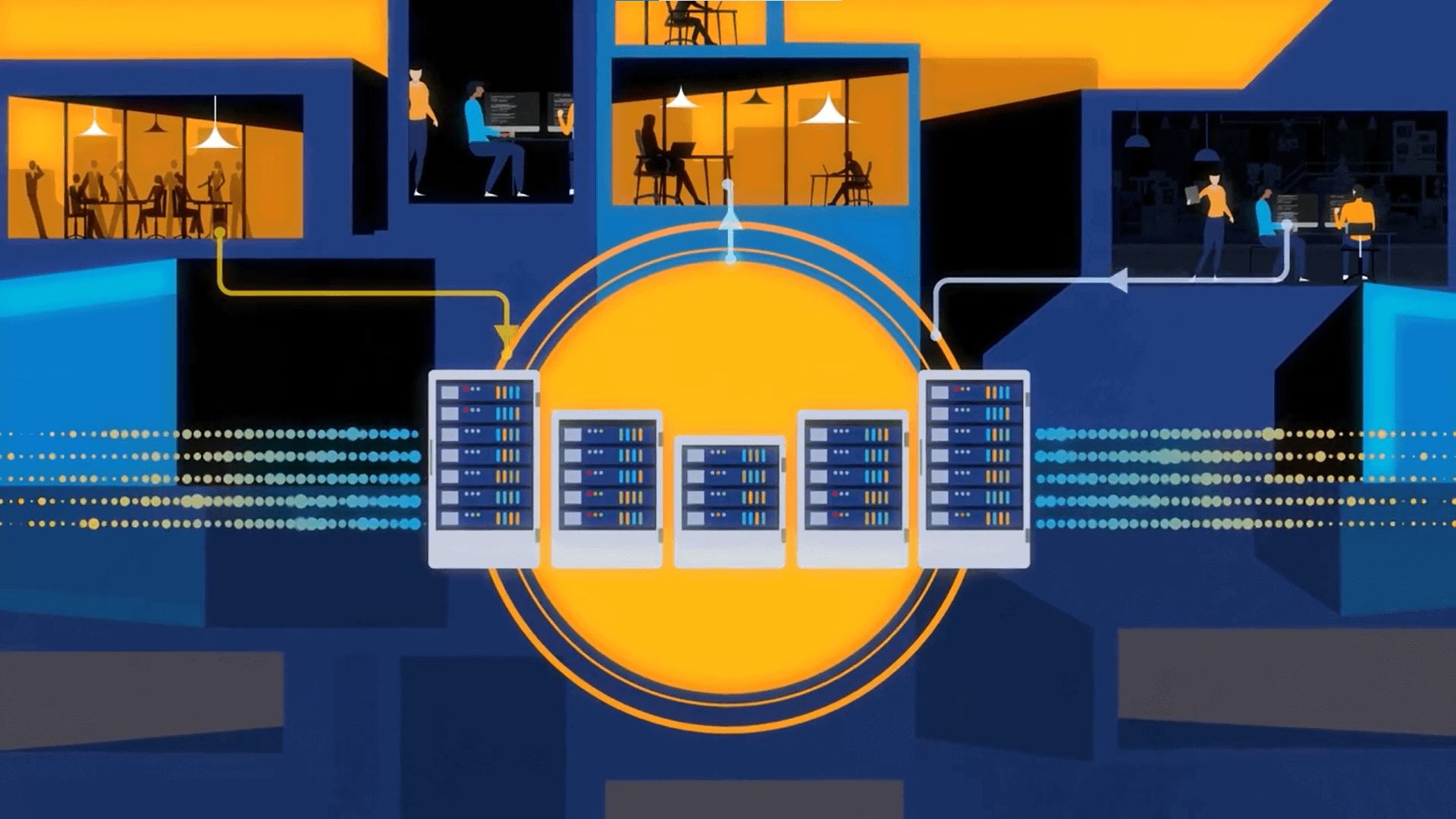

Why data infrastructure is key for predictive analytics

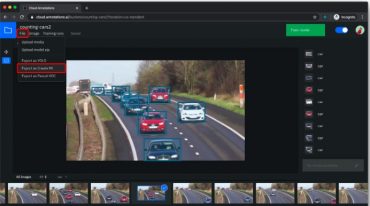

Because consumer behavior data is on the scale of petabytes, analytic queries can tax data stores. Sadovsky helped solve that problem by moving Oracle Data Cloud's data processing from a single on-premises machine to cloud-based Apache Hive and, ultimately, to Apache Spark, a cluster-computing framework for fast data processing with built-in machine learning libraries.

“We moved to Spark because we wanted to let the data drive the story, and in order to do that we need the most efficient technology that can use the most data as possible,” Sadovsky said. Speed was the second reason: in the advertising world, online behavior can change quickly, and Spark offered an edge in analyzing that fast-changing data and reaching target consumers.

“So that means we get quicker turnaround for our customers,” he said. “If they’re looking for a specific audience, we get that analysis to them quicker.” Moving to the Spark-based Oracle Data Cloud improved the speed of analytic processes by 300%, he said.

Spark and machine learning: a data scientist’s dreamland

Spark is also well suited to running machine learning queries in parallel, which lets Sadovsky’s team run different algorithms side by side to see which one best solves the problem. That approach is useful in almost any context where pattern detection is key, such as fraud detection or predictive maintenance.

Interest in Spark is broad: a recent Syncsort report found that 70 percent of IT managers and BI analysts are more interested in Spark than in MapReduce.

In the case of consumer behavior, because consumers shop for different products and exhibit varying behaviors, the team runs multiple algorithms to find the one best suited to each predictive task, and that’s where Apache Spark excels, Sadovsky said.

“With machine learning, one size doesn’t fit all,” he said.
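In practice, "one size doesn't fit all" describes model selection. You train several candidate algorithms on the same data, score each one on held-out examples, and keep the winner. Spark's MLlib ships tooling for this, such as `CrossValidator` and `TrainValidationSplit` in `pyspark.ml.tuning`. To stay self-contained, though, the minimal sketch below is plain Python with no Spark dependency. Everything in it is hypothetical: the two behavioral features, the toy booking data that echoes the hotel example, and the three deliberately simple candidate models. It is not Oracle's pipeline.

```python
"""Toy model selection: fit several candidate models, keep the most accurate.

Hypothetical sketch only; this is not Oracle's pipeline or Spark's API.
Features are invented: (hotel_searches_last_30_days, trips_last_year).
"""
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Tuple

Row = Tuple[float, ...]
Model = Callable[[Row], int]  # returns 1 = likely to book, 0 = not
Dataset = Tuple[List[Row], List[int]]


def fit_majority(X: List[Row], y: List[int]) -> Model:
    """Baseline: always predict the most common label in the training set."""
    majority = 1 if 2 * sum(y) >= len(y) else 0
    return lambda row: majority


def fit_stump(X: List[Row], y: List[int]) -> Model:
    """One-feature decision stump: predict 1 when feature j >= threshold t."""
    best_acc, best_j, best_t = -1, 0, 0.0
    for j in range(len(X[0])):
        for t in sorted({row[j] for row in X}):
            acc = sum((1 if row[j] >= t else 0) == label for row, label in zip(X, y))
            if acc > best_acc:
                best_acc, best_j, best_t = acc, j, t
    return lambda row: 1 if row[best_j] >= best_t else 0


def fit_nearest_centroid(X: List[Row], y: List[int]) -> Model:
    """Predict the label whose class centroid (mean feature vector) is closer."""
    def centroid(rows: List[Row]) -> List[float]:
        return [sum(col) / len(rows) for col in zip(*rows)]

    def sq_dist(a: Row, b: List[float]) -> float:
        return sum((p - q) ** 2 for p, q in zip(a, b))

    c1 = centroid([r for r, label in zip(X, y) if label == 1])
    c0 = centroid([r for r, label in zip(X, y) if label == 0])
    return lambda row: 1 if sq_dist(row, c1) <= sq_dist(row, c0) else 0


def accuracy(model: Model, X: List[Row], y: List[int]) -> float:
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)


def select_best(candidates: Dict[str, Callable[[List[Row], List[int]], Model]],
                train: Dataset, valid: Dataset) -> Tuple[str, Dict[str, float]]:
    """Fit every candidate on `train`, score it on `valid`, return the winner."""
    X_tr, y_tr = train
    X_va, y_va = valid

    def evaluate(item):
        name, fit = item
        return name, accuracy(fit(X_tr, y_tr), X_va, y_va)

    # Candidates are independent, so they can be evaluated concurrently. Threads
    # keep the sketch simple (in CPython they won't speed up CPU-bound work);
    # a cluster framework would spread this fan-out across machines instead.
    with ThreadPoolExecutor() as pool:
        scores = dict(pool.map(evaluate, candidates.items()))
    best = max(scores, key=scores.get)
    return best, scores


CANDIDATES = {
    "majority": fit_majority,
    "stump": fit_stump,
    "nearest_centroid": fit_nearest_centroid,
}
TRAIN: Dataset = (
    [(0, 0), (1, 0), (2, 1), (8, 3), (9, 2), (7, 4), (1, 1), (10, 5)],
    [0, 0, 0, 1, 1, 1, 0, 1],
)
VALID: Dataset = ([(0, 1), (6, 3), (3, 0), (9, 4)], [0, 1, 0, 1])

if __name__ == "__main__":
    print(select_best(CANDIDATES, TRAIN, VALID))
    # ('nearest_centroid', {'majority': 0.5, 'stump': 0.75, 'nearest_centroid': 1.0})
```

Each candidate is fit and scored independently of the others, so the work fans out naturally. That independence is what makes the approach a good match for a cluster framework. In Spark, for instance, `CrossValidator` exposes a `parallelism` parameter (since Spark 2.3) for evaluating candidate settings concurrently.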

Sadovsky will be speaking more on the subject at the Data Platforms 2017 conference, held May 24–26.