There is still a gap between the data and choosing the best course of action.

Gartner recently predicted that more than 40% of data science tasks will be automated by 2020. However, predictions such as Gartner’s tend to overlook key components of data science. Admittedly, one of the primary functions of data science is to choose an appropriate predictive model by parsing and analyzing massive data sets.

Algorithms, and the machines that deploy them, are efficient at mathematically driven objectives. However, they cannot make decisions for humans, and they are still very poor at the semantics of language – although many tech companies are fervently laboring to change that.

Devavrat Shah, co-director of MIT’s Professional Education data science course, concurs that data scientists “must prepare by moving beyond the details of infrastructure implementation and begin to focus on how to turn data into decisions.” As such, data scientists will still need to acquire, maintain, and continually improve their skill in processing giant data sets through a thorough understanding and application of:

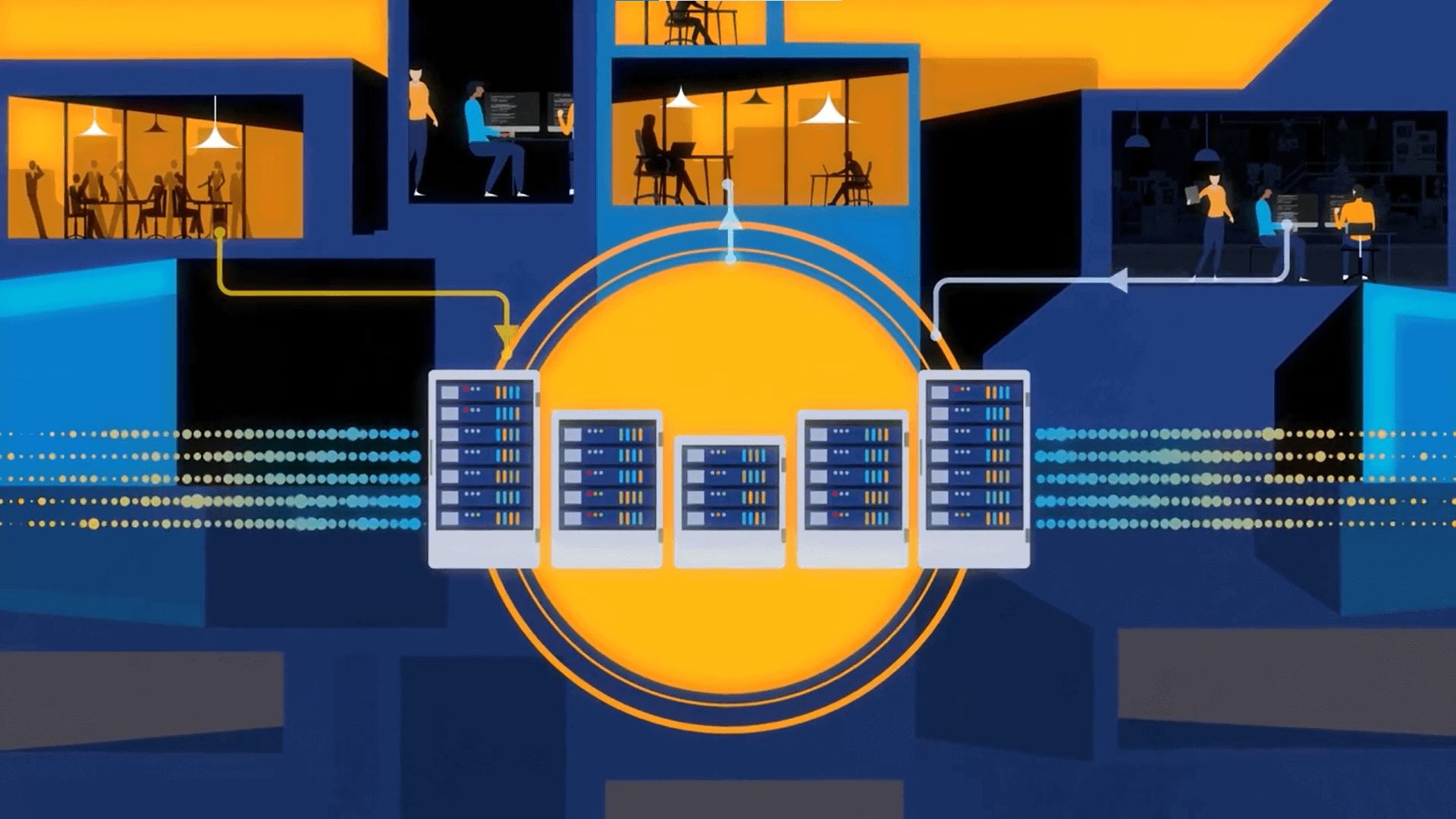

- Database infrastructure and processing

- Scripting languages: Python, etc.

- Statistical computation: R, etc.

- Machine learning

- Experimental design

- Bayesian inferencing

- Data visualization

- Data storytelling

- Data psychology

Data psychology and decision making

Most people who follow the buzz about data science have not seen “data psychology” on a list of data science skills. A key principle of data psychology is that being human does not automatically translate into making accurate decisions. The decisions that data scientists – or any human beings – make depend largely on their internal psychology. When humans make a decision, their thought process is tied to their individual perception of the best course of action. This process of moving from experience (or data) to a decision is known as inferencing.

While the world of statistics has mathematical controls for reducing bias and ensuring that “no unconscious arbitrary assumptions have been introduced,” there is still a gap between the data and choosing the best course of action. Add to this that humans continue to control the “teaching” of machines through the use of programming languages – which are not completely free from bias – and there is a ghost in the machine that data scientists can leverage: metacognition.

Metacognition is not a simple “task.” Constant subconscious and unconscious cognitive activity takes place while human beings go about their work. Data reveals this aspect, but it takes some digging, both externally and internally, to unveil those depths.

Some experts believe that business intelligence analysts will evolve into “exception handlers” for a large swath of decisions made by machines (see “A Business Intelligence Strategy for Real-Time Analytics”). Efficient machines may be able to assume some cognitive tasks; however, much data continues to be human-oriented. Businesses aren’t constructed to serve machines; most are focused on capturing human consumers. As such, for the time being, only humans have the capability to understand other human beings thoroughly.
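To make the “exception handler” idea concrete, here is a minimal sketch of how such a hand-off might look. It assumes each machine decision carries a confidence score and that anything below a chosen threshold is routed to a human analyst. The `Decision` class, the `route` function, the sample cases, and the 0.8 threshold are all hypothetical illustrations, not part of any cited strategy or product.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    """A machine-made decision (hypothetical structure for illustration)."""
    case_id: str
    action: str
    confidence: float  # the model's own confidence estimate, from 0.0 to 1.0


def route(decisions, threshold=0.8):
    """Split decisions into those applied automatically and those
    escalated to a human analyst as exceptions.

    The threshold is an assumed, tunable value.
    """
    automated, exceptions = [], []
    for d in decisions:
        if d.confidence >= threshold:
            automated.append(d)
        else:
            exceptions.append(d)
    return automated, exceptions


# Made-up sample decisions, purely for demonstration.
decisions = [
    Decision("order-1", "approve", 0.97),
    Decision("order-2", "reject", 0.55),
    Decision("order-3", "approve", 0.80),
]

automated, exceptions = route(decisions)
print("Applied automatically:", [d.case_id for d in automated])
print("Sent to an analyst:", [d.case_id for d in exceptions])
```

In a setup like this, the analyst's effort goes to the ambiguous cases, which is where human judgment about other humans is most likely to matter.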

The hard skills required of data scientists are more easily obtained than the soft skills that only a human can possess. Businesses can either train data scientists in-house in the hard skills or seek out established data scientists with the ideal programming and mathematical experience. Machines will eventually be able to assume many of those functions. However, we are still a long way from machines that can interpret the vast range of human experience.