LinkedIn implemented Apache Kafka to handle real-time data feeds and constructed “Gobblin,” a data integration and ingestion framework.

Several years ago, LinkedIn recognized how crucial data is to measuring consumer activity reliably. But the organization had to find a way to unify its data ecosystem so that internal users could use that data to its fullest extent. Shrikanth Shankar, LinkedIn’s director of engineering and data analytics infrastructure, shared how the company did that at the 2017 Qubole Data Platforms conference.

While merging various user pipelines, Shankar explained, LinkedIn discovered that back-end users such as data scientists were deploying different pipelines tailored to their specific business needs. For example, the data science team would build a custom query to pull historical and real-time consumer data in order to test a machine learning model that compared and predicted user activity over time. Meanwhile, data analysts had a separate pipeline for pulling the same data for the marketing department.

LinkedIn data: taming the monster

Not only was this a messy and time-intensive way for the data engineering team to work, but it also often produced inconsistent results due to the high variability of the data. At the time, LinkedIn had roughly 400 million registered members, a number that has since grown past 500 million. The user interface was continuously being updated to keep pace, and the back-end schemas had to change along with it.

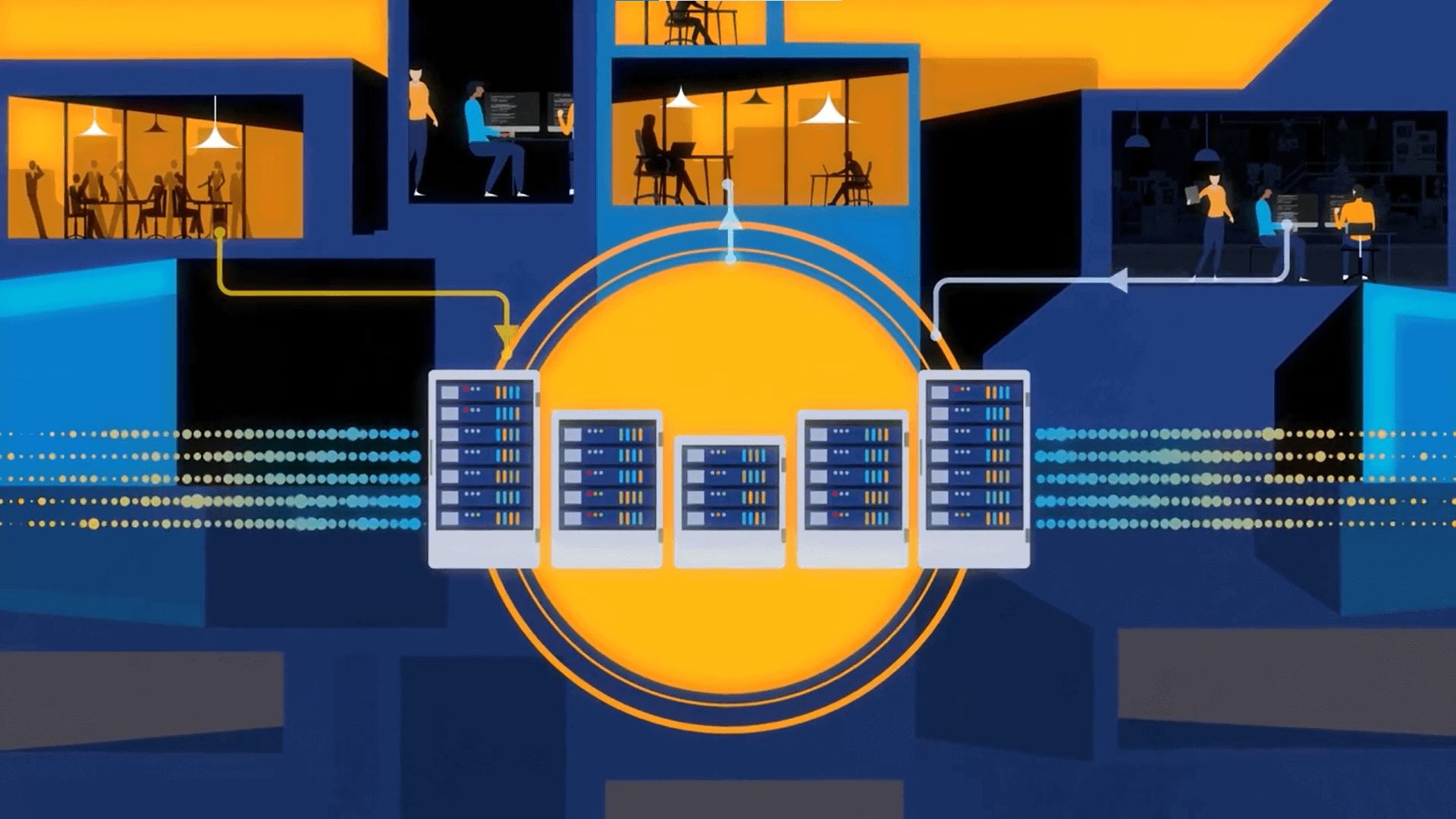

To streamline the pipelines and create a central pipeline transport, LinkedIn implemented Apache Kafka to handle real-time data feeds. The team also built “Gobblin” (because it was “gobbling in” all of LinkedIn’s data), a data integration and ingestion framework that pulled data from the various internal and external sources unique to LinkedIn. This cleared up the pipeline congestion and gave every team a single platform for faster data access and faster analytics, which translated into quicker insights.
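To illustrate why a central transport helps with consistency, here is a minimal in-memory sketch. It is not the Kafka API and not LinkedIn's implementation; the `CentralLog` class, the `page_views` topic, and the group names are hypothetical and exist only for illustration. The idea it shows is that every team reads the same append-only stream of events and tracks its own position in it, rather than each team pulling the data through a separate custom pipeline.

```python
from collections import defaultdict


class CentralLog:
    """Toy stand-in for a central pipeline transport (NOT the Kafka API)."""

    def __init__(self):
        self._topics = defaultdict(list)   # topic name -> append-only list of events
        self._offsets = defaultdict(int)   # (topic, group) -> next index to read

    def publish(self, topic, event):
        """Append an event to the topic and return its position (offset)."""
        self._topics[topic].append(event)
        return len(self._topics[topic]) - 1

    def poll(self, topic, group):
        """Return the events this group has not yet read, then advance its offset."""
        start = self._offsets[(topic, group)]
        events = self._topics[topic][start:]
        self._offsets[(topic, group)] = len(self._topics[topic])
        return events


log = CentralLog()
log.publish("page_views", {"member": 1, "page": "/jobs"})
log.publish("page_views", {"member": 2, "page": "/feed"})

# Two hypothetical teams read independently but see identical events.
data_science_view = log.poll("page_views", group="data-science")
analytics_view = log.poll("page_views", group="analytics")
```

Because both groups consume from one shared log, neither depends on the other's query logic, and a new team can be added without building another pipeline.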

But even if a company has the infrastructure in place to manage and derive insight from multiple data inputs, it still needs a supporting data-driven culture. This is why LinkedIn’s data team has both a centralized group and sub-groups that work with other departments. For example, an analytics team may work with product development personnel, while data scientists might collaborate with marketing to A/B test an email campaign using keywords that trigger certain behavioral responses from LinkedIn users.

Lessons learned from LinkedIn

Regardless of the size of your organization, Shankar and the LinkedIn use case provide several key takeaways:

- There is an achievable balance between democratizing data by making it available and tailoring accessibility to specific employee needs.

- Data democratization is contingent on creating a unified ingestion framework, so all data is collected in one place.

- Big data success relies on establishing a data team that is both centralized and sub-grouped to collaborate with other departments on data access and analytics.

Unifying a data ecosystem takes time and expertise. Thanks to the open source movement, plenty of tools are available to help companies establish the right infrastructure. Out-of-the-box and automated solutions are also an option, many of which can be found through the enterprise partners of the large cloud-based and web services platforms. (Qubole’s take on the above is that complexity can be overcome with automation.)

As for creating the data-driven culture, careful planning and team development are the cornerstones for ensuring the success of the all-important human element in the big data matrix.