In today’s fast-paced digital landscape, businesses are constantly seeking ways to stay competitive and meet the evolving needs of their customers. Modern applications are revolutionizing how users interact with digital products and services, offering a seamless and consistent experience while also providing tangible benefits for businesses in terms of reach, engagement, and cost-effectiveness.

Many companies are adopting a microservices approach to application development – breaking down the overall functionality of an application into several more granular services that can be developed, deployed, and scaled independently. Each service focuses on a specific capability and communicates with other services to achieve a broader objective. This pattern is designed to reduce deployment time, increase developer productivity, and maximize resource utilization efficiency.

When compared to the development of more monolithic applications in the past, the microservices technique of decoupling application components introduces some new requirements around handling connectivity and integration within one logical application. It can also create some organizational challenges around team collaboration, especially when multiple development groups are contributing to a single application. It’s worth exploring the role that events and event-driven architectures can play in addressing these requirements and challenges so that the benefits of modern application architectures can truly be realized.

Connecting Microservices Using Events

Microservices need to communicate with each other, whether to exchange data or to initiate tasks. Events enable this communication while keeping the services loosely coupled: an event broker or event store sits between the microservices as an intermediary, so one service can make a request or retrieve data without calling another directly.

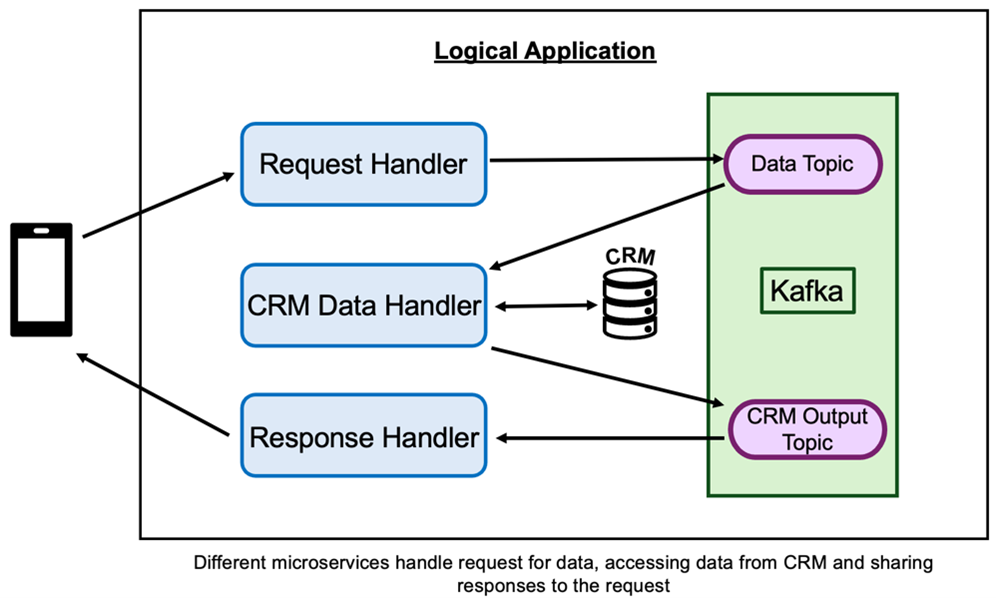

Let’s take an example where one part of an application is responsible for accessing customer data and providing it to other parts of the application. The “data retrieval” microservice must process data requests from other microservices, retrieve the data, and make it available as needed. The customer data resides in a third-party CRM system outside the application. Using an event-driven approach, other microservices send events to request particular customer details. The data retrieval microservice processes these requests (by querying the external CRM) and publishes the results as events that are consumed asynchronously. This keeps the end-to-end activity loosely coupled, so the components can operate independently.

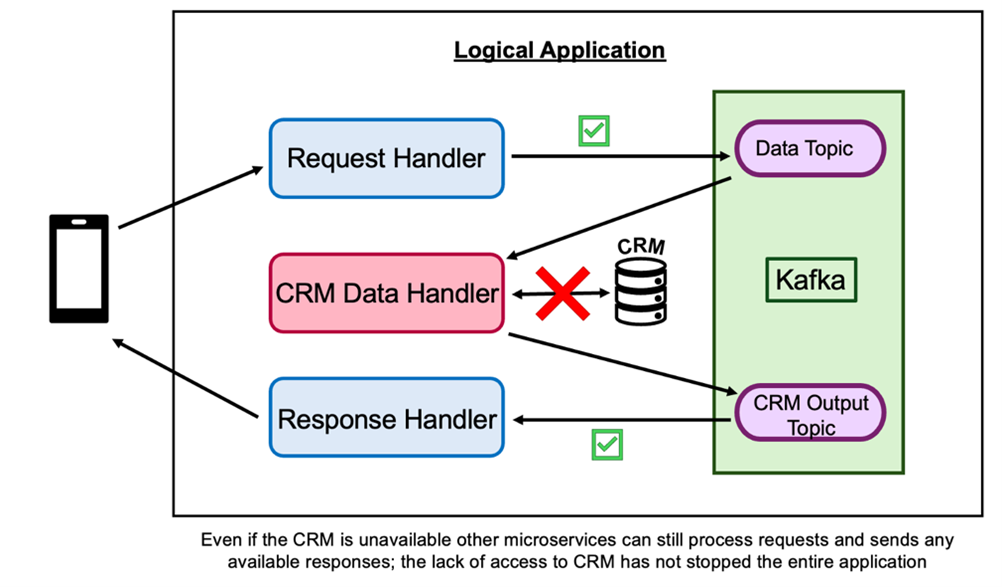

Imagine an alternative where the requests between microservices are made synchronously, for example, via REST API calls. If the CRM is temporarily unavailable, the data retrieval microservice can no longer perform its task. In addition, the microservices making requests either have to wait or try again later, which takes them away from other work. By consuming output as events instead, the microservices requesting customer data can continue to work, and can even submit further requests that are ready for processing as soon as the CRM becomes available again.

Here, events are providing more resilience to failures because components can operate independently. If one component fails, it doesn’t necessarily bring down the entire system.
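
As a rough illustration of the customer data example, the sketch below uses a toy in-memory broker in place of a real event broker. All names here (`InMemoryBroker`, `DataRetrievalService`, `FakeCrm`, and the topic names `customer.requests` and `customer.details`) are hypothetical and chosen only for illustration. It shows requests accumulating while the CRM is down and being processed once it recovers.

```python
from collections import deque


class InMemoryBroker:
    """Toy stand-in for an event broker: one FIFO queue per topic."""

    def __init__(self):
        self.topics = {}

    def publish(self, topic, event):
        self.topics.setdefault(topic, deque()).append(event)

    def poll(self, topic):
        queue = self.topics.get(topic)
        return queue.popleft() if queue else None

    def requeue(self, topic, event):
        # Put the event back at the front so ordering is preserved.
        self.topics.setdefault(topic, deque()).appendleft(event)

    def pending(self, topic):
        return len(self.topics.get(topic, ()))


class CrmUnavailable(Exception):
    """Raised by the (hypothetical) CRM client when the CRM cannot be reached."""


class FakeCrm:
    """Simulated third-party CRM whose availability can be toggled."""

    def __init__(self, available=True):
        self.available = available

    def lookup(self, customer_id):
        if not self.available:
            raise CrmUnavailable(customer_id)
        return {"contact_preference": "email"}


class DataRetrievalService:
    def __init__(self, broker, crm_lookup):
        self.broker = broker
        self.crm_lookup = crm_lookup

    def process_pending(self):
        """Handle queued requests until the queue is empty or the CRM fails."""
        handled = 0
        while True:
            request = self.broker.poll("customer.requests")
            if request is None:
                break
            try:
                details = self.crm_lookup(request["customer_id"])
            except CrmUnavailable:
                # Leave the request on the topic and retry on a later pass.
                self.broker.requeue("customer.requests", request)
                break
            self.broker.publish(
                "customer.details",
                {"customer_id": request["customer_id"], "details": details},
            )
            handled += 1
        return handled
```

Requesting services only call `publish` and carry on with other work; nothing blocks while the CRM is unavailable, and the backlog drains on the next call to `process_pending` after it returns.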

Using Events to Scale to Meet Varying Workloads

Events also enable better scalability by allowing different parts of an application to scale based on demand. As the number of events increases or decreases for a particular microservice to process, that component can be scaled up or down accordingly. With each microservice tasked with processing a certain set of events, it’s easy to identify pinch points and react accordingly. Let’s imagine we start to see substantial consumer lag for a particular topic, indicating that one of our microservices is falling behind. Additional instances of that microservice can be deployed automatically to cope with the increased demand without unnecessarily changing the capacity of other microservices that are part of the same application.
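
The scaling decision described above can be sketched as a simple function of consumer lag. This is a minimal illustration, not a production autoscaler: the function name, the `lag_per_instance` target, and the default limits are all assumptions made for the example.

```python
import math


def desired_instances(total_lag, lag_per_instance=1000,
                      min_instances=1, max_instances=10):
    """Return how many consumer instances to run for a given backlog.

    total_lag: number of events published but not yet processed.
    lag_per_instance: assumed backlog one instance can comfortably absorb.
    max_instances: upper bound; with Kafka consumer groups this would
        typically be capped at the topic's partition count.
    """
    needed = math.ceil(total_lag / lag_per_instance)
    return max(min_instances, min(max_instances, needed))
```

For example, `desired_instances(4500)` asks for five instances, while an idle topic falls back to the configured minimum. Only the microservice consuming the lagging topic is resized; the others are left alone.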

Serverless computing supports this approach. It abstracts away infrastructure management and lets code be deployed flexibly in response to events, so that only the compute resources needed for current demand are activated and resources are used more efficiently.

Event-Based Interfaces to Enable Collaboration Between Teams

APIs enable developers to collaborate effectively, helping them to build on top of existing solutions and share their work with others. By using good documentation and open standards such as OpenAPI, they can effectively and consistently communicate the services provided by their application, including how to make a request and what to expect as output. When multiple teams work together to build an application consisting of multiple microservices, APIs also help establish a contract between those teams. This facilitates a smoother integration phase as the components of an application are brought together.

As events are used as the connectivity between microservices, these same needs exist and can be satisfied by formalizing event-based interfaces in the same way as APIs. Emerging standards like AsyncAPI enable events to be described in a standard way, capturing details about the event source, format, and payload to be expected. Events can be shared in developer portals, making it easy for application developers in different teams to share the interfaces they are creating, as well as discover the interfaces being made available by other teams in their organization. This helps avoid duplication of efforts and encourages reuse of existing events.
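
To make the idea of an event contract concrete, here is a small sketch of a contract object that records the channel and expected payload fields, and checks an event against them. It is not AsyncAPI syntax; it only mirrors, loosely, the kind of information such a description captures. The class name, channel name, and fields are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class EventContract:
    """Hypothetical, simplified description of an event-based interface."""

    channel: str          # where the event is published
    description: str      # human-readable summary for the developer portal
    payload_fields: dict  # field name -> expected Python type

    def violations(self, payload):
        """Return a list of ways the payload breaks the contract."""
        problems = []
        for name, expected in self.payload_fields.items():
            if name not in payload:
                problems.append(f"missing field: {name}")
            elif not isinstance(payload[name], expected):
                problems.append(
                    f"wrong type for {name}: expected {expected.__name__}"
                )
        return problems


customer_details = EventContract(
    channel="customer.details",
    description="Customer details retrieved from the CRM",
    payload_fields={"customer_id": str, "email": str},
)
```

A producing team could run `customer_details.violations(event)` before publishing, and a consuming team could run the same check in its tests, so both sides agree on the contract before integration.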

Event interfaces also need governance. For IT managers seeking to streamline governance and access control, event gateways provide a centralized point for managing access to event interfaces. They can enforce control policies, for example, to ensure that only authorized applications have access, to manage fair usage, or to redact sensitive information before it is shared.
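
The three policies mentioned above (authorization, fair usage, and redaction) can be sketched in a few lines. This is a toy model under stated assumptions, not the behavior of any particular gateway product; `EventGateway`, its parameters, and the `[REDACTED]` marker are all invented for the example.

```python
class EventGateway:
    """Toy gateway that applies three policies before delivering an event."""

    def __init__(self, authorized_clients, sensitive_fields, quota_per_client):
        self.authorized_clients = set(authorized_clients)
        self.sensitive_fields = set(sensitive_fields)
        self.quota_per_client = quota_per_client
        self.delivered = {}  # client id -> number of events delivered so far

    def deliver(self, client_id, event):
        # Policy 1: only authorized applications may consume events.
        if client_id not in self.authorized_clients:
            raise PermissionError(f"{client_id} is not authorized")

        # Policy 2: fair usage, modeled here as a fixed per-client quota.
        used = self.delivered.get(client_id, 0)
        if used >= self.quota_per_client:
            return None
        self.delivered[client_id] = used + 1

        # Policy 3: redact sensitive fields before sharing the event.
        return {
            key: "[REDACTED]" if key in self.sensitive_fields else value
            for key, value in event.items()
        }
```

Because the policies live in one place, producing teams do not need to reimplement them in each microservice.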

Processing Events to Build Responsive Applications

One of the benefits of building modern applications using events is greater responsiveness. Events are typically processed in near real-time, allowing applications to respond quickly to changes or input. Many modern applications depend on this real-time processing to give users timely information and improve the overall user experience.

Many of these applications are less concerned with individual events and more with the context in which they occur. It may be a combination of events, or an event pattern or trend, that provides the real insight that an application can then act on. An event processing capability might be provided as one of the microservices within an application, refining the raw events, detecting situations or anomalies, and then triggering other microservices to act. This might be to drive a business process or workflow, to apply business rules to make an instant decision, or to provide input to a digital assistant.

Back to our example, let’s say one of the microservices requesting customer data is processing a customer complaint event and needs the information to reach out to the customer using their contact preferences. In addition, that microservice sends the complaint event to an event processing service that aggregates the number of complaints over the past week. If the output indicates that complaint volumes have risen significantly above normal levels, additional actions can be triggered, for instance, initiating a “service at risk” process for the company’s operations team to investigate. Triggering this using events gives the team a chance to mitigate ongoing impacts at the earliest opportunity, which would not have been possible without the context provided by real-time event processing.
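
The weekly complaint aggregation can be sketched as a sliding window over event timestamps. The class name, the `baseline` and `factor` parameters, and the rule "more than baseline times factor" are assumptions chosen for illustration; a real event processing service would define "normal levels" in its own way. The sketch also assumes events arrive in timestamp order.

```python
from collections import deque
from datetime import datetime, timedelta


class ComplaintMonitor:
    """Counts complaint events in a sliding window and flags unusual volume."""

    def __init__(self, window=timedelta(days=7), baseline=10, factor=2.0):
        self.window = window
        self.baseline = baseline  # assumed normal complaints per window
        self.factor = factor      # how far above normal counts as "at risk"
        self.timestamps = deque()

    def record(self, timestamp):
        """Add one complaint; return True if a 'service at risk' alert is due."""
        self.timestamps.append(timestamp)
        cutoff = timestamp - self.window
        # Drop complaints that have aged out of the window.
        while self.timestamps and self.timestamps[0] <= cutoff:
            self.timestamps.popleft()
        return len(self.timestamps) > self.baseline * self.factor
```

When `record` returns `True`, the service would publish a further event (for example, to a hypothetical `service.at-risk` topic) that the operations workflow consumes.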